I have been building data protection software for the datacenter and the public cloud, and I have explored multiple backup products.

These are my thoughts, based on the challenges I faced, my interactions with customers, and other best-practice guides I have read.

Data Protection Challenges

Backup Sizing

Assume you have a datacenter with a few ESXi servers and multiple virtual machines. One of the biggest challenges is sizing the backup.

To get the right numbers, you first need to calculate the size of a full backup, how often you will take one, and how long you will retain it.

Say you have 10 virtual machines of 200 GB each, a full backup comes to around 1.5 TB after compression and deduplication, and you want to take one every week and retain it for 3 months. That is about 13 full backups, so you need approximately 20 TB of storage.
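The arithmetic behind that estimate can be sketched in a few lines of Python. This is a rough illustration, not a sizing tool: the function name is made up, 3 months is assumed to be 90 days, and every full backup is assumed to occupy its whole size with no extra deduplication across copies.

```python
import math


def full_backup_storage_tb(full_size_tb: float, interval_days: int, retention_days: int) -> float:
    """Storage needed to hold every full backup inside the retention window."""
    # Number of fulls alive at once, rounded up so a partial interval still counts.
    copies = math.ceil(retention_days / interval_days)
    return copies * full_size_tb


# 1.5 TB full backup, taken weekly, kept for roughly 3 months (assumed 90 days).
print(full_backup_storage_tb(1.5, 7, 90))  # 19.5
```

Thirteen weekly copies at 1.5 TB each give 19.5 TB, which is where the 20 TB figure comes from.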

Next, you also need to estimate the incremental backups and log backups, if any.

Typically, an incremental backup holds the data that changed after the full backup, and its size depends on the type of application you are running. If you take one every day, it will typically be around 1-15% of your full backup.
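To see what that 1-15% range means for storage, here is a minimal sketch. It assumes a 1.5 TB full backup, weekly fulls, daily incrementals on the remaining days, and a 90-day retention; the function name and these defaults are illustrative assumptions, and real change rates vary from day to day.

```python
import math


def incremental_storage_tb(full_size_tb: float, daily_change_rate: float,
                           retention_days: int = 90, full_interval_days: int = 7) -> float:
    """Rough storage for daily incrementals kept over the retention window."""
    # Days on which a full backup runs do not need an incremental.
    fulls = math.ceil(retention_days / full_interval_days)
    incrementals = retention_days - fulls
    return full_size_tb * daily_change_rate * incrementals


low = incremental_storage_tb(1.5, 0.01)   # 1% daily change
high = incremental_storage_tb(1.5, 0.15)  # 15% daily change
print(f"{low:.1f} TB to {high:.1f} TB")  # 1.2 TB to 17.3 TB
```

The spread between the low and high estimates is large, which is why knowing the change rate of your application matters so much for sizing.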

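To calculate the throughput required of the backup media, you need to decide on a backup window, and that involves a trade-off: a shorter window needs more throughput, while a longer window keeps backups running for more of the day.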

This calculation also helps determine the required throughput of the backup server and the storage system.
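As a rough sketch of that throughput calculation: divide the amount of data to move by the length of the backup window. The 8-hour and 4-hour windows below are assumptions for illustration, the function name is made up, and decimal units are used (1 TB = 1,000,000 MB).

```python
def required_throughput_mb_s(data_tb: float, window_hours: float) -> float:
    """Sustained throughput needed to move data_tb within the backup window."""
    if window_hours <= 0:
        raise ValueError("backup window must be positive")
    megabytes = data_tb * 1_000_000  # decimal units: 1 TB = 1,000,000 MB
    seconds = window_hours * 3600
    return megabytes / seconds


# The same 1.5 TB full backup with two different (assumed) windows.
print(f"{required_throughput_mb_s(1.5, 8):.1f} MB/s")  # 52.1 MB/s
print(f"{required_throughput_mb_s(1.5, 4):.1f} MB/s")  # 104.2 MB/s
```

Halving the window doubles the sustained throughput that the backup server, the network, and the storage system all have to deliver.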

If you also want to follow the 3-2-1 backup strategy (three copies of the data, on two different types of media, with one copy offsite), the same calculation is required for how much you want to keep offsite and how frequently.

These calculations get complex if you have unpredictable workloads. Sizing is complicated and full of unknowns, but a properly sized backup system helps you meet the backup SLA and avoid wasting money on excess compute and I/O capacity.

Patching the Backup Software

Ransomware attacks now target backup systems. Gone are the days when you could ignore critical security patches for the operating system your backup software runs on.

If you have Veeam installed in your datacenter, it runs as a service on your Windows operating system, so you control the operating system hotfixes and security patches. But if your backup vendor gives you an appliance with restricted terminal access, you have to depend on the vendor to update the operating system.

Clumio offers backup as a service, where you don’t need to worry about updating the software. I still need to explore how it works behind the scenes and what technology it uses.

Other Challenges

There are other challenges beyond those mentioned above: hardware requirements, software requirements, license cost and renewal, multiple players in the market, choosing the right tool for your environment (fully virtualized or running on bare metal), tapes for archiving, and hyper-converged infrastructure.

Solution to the Challenges

Modern data protection software such as Druva, Clumio, and Veeam tries to solve these problems and reduce the complexity of data protection and disaster recovery.

People are moving away from tape and storing data in the cloud for archival: copy to S3, then move to Glacier for long-term archival.

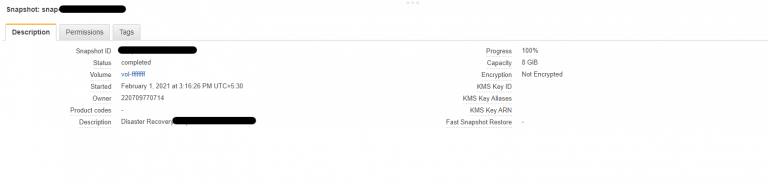
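One way to express the S3-to-Glacier move is an S3 lifecycle rule. The sketch below only builds the rule as a Python dictionary in the shape that boto3's `put_bucket_lifecycle_configuration` accepts. The bucket name, the `backups/` prefix, the rule ID, and the 30-day and 365-day values are all assumptions chosen for illustration.

```python
def archive_lifecycle(prefix: str, glacier_after_days: int, expire_after_days: int) -> dict:
    """Build an S3 lifecycle configuration that moves backups to Glacier, then expires them."""
    if expire_after_days <= glacier_after_days:
        raise ValueError("objects must expire after they transition to Glacier")
    return {
        "Rules": [
            {
                "ID": "archive-backups",        # hypothetical rule name
                "Filter": {"Prefix": prefix},   # only objects under this prefix
                "Status": "Enabled",
                "Transitions": [
                    {"Days": glacier_after_days, "StorageClass": "GLACIER"}
                ],
                "Expiration": {"Days": expire_after_days},
            }
        ]
    }


config = archive_lifecycle("backups/", glacier_after_days=30, expire_after_days=365)

# With boto3 installed and AWS credentials configured, the rule could be applied with:
#   boto3.client("s3").put_bucket_lifecycle_configuration(
#       Bucket="example-backup-bucket", LifecycleConfiguration=config)
```

Once a rule like this is in place, S3 moves the objects on its own schedule, so the backup software does not need a separate archival job.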

Many companies responded with disk-based backup products specifically targeting virtualized environments. They snapshot the primary volume for short-term backup and replicate to a secondary node to protect against disaster.

Hyper-converged backup products reduce the complexity of backup. They make the solution simpler by reducing the number of vendors and products customers need to maintain in their datacenter.

All these solutions are designed to run in the datacenter. Simply lifting and shifting an on-premises solution is not optimal for the cloud.

For cloud data protection, the problem should be approached from a different perspective.

Backup system sizing should be dynamic in the cloud. Instead of provisioning all the resources upfront, the system should be able to scale up and down dynamically based on the customer's needs.
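A minimal sketch of what dynamic sizing could look like: derive the number of backup workers from the data waiting to be backed up and the backup window, instead of fixing it upfront. Everything here is hypothetical, including the function name, the 25 MB/s per-worker throughput, and the minimum and maximum worker limits; a real system would feed the result into its own scaling mechanism.

```python
import math


def desired_workers(pending_tb: float, window_hours: float, worker_mb_s: float,
                    min_workers: int = 1, max_workers: int = 20) -> int:
    """Number of backup workers needed to finish the pending data within the window."""
    # Required aggregate throughput in MB/s (decimal units: 1 TB = 1,000,000 MB).
    required_mb_s = pending_tb * 1_000_000 / (window_hours * 3600)
    needed = math.ceil(required_mb_s / worker_mb_s)
    # Clamp to the configured limits so the fleet never drops to zero or runs away.
    return max(min_workers, min(max_workers, needed))


print(desired_workers(1.5, 8, 25))  # 3
print(desired_workers(0, 8, 25))    # 1
print(desired_workers(100, 8, 25))  # 20
```

The same calculation run periodically lets the system shrink back to the minimum when there is little to back up, which is where the cost saving comes from.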

Operating system patching should happen on the fly: a fresh instance should be provisioned from the latest AMI and sessions redirected to it, and whenever the system scales up, new instances in the scaling group should come from the new AMI.

Instead of tape, a cloud solution should use an object store for long-term archival.

Also published on Medium.